This contest is the second edition of the SpaceNet building footprint detection challenge. This popular Marathon Match, sponsored by CosmiQ Works, DigitalGlobe, and NVIDIA, challenges the Topcoder Community to develop automated methods for extracting map-ready building footprints from high-resolution satellite imagery. Moving toward more accurate, fully automated extraction of buildings will help bring innovation to computer vision methodologies applied to high-resolution satellite imagery, and ultimately help create better maps where they are most needed.

If the SpaceNet Challenge Round 2 were a basketball game, we would just be starting the fourth quarter.

We were able to achieve an IoU of 0.545 on the test set.

U-Net architecture (example for 32x32 pixels in the lowest resolution). Each blue box corresponds to a multi-channel feature map. The number of channels is denoted on top of the box. The x-y-size is provided at the lower left edge of the box. White boxes represent copied feature maps. The arrows denote the different operations.

Future roadmap

Currently, we have a model that works fairly well on most city images we have. Yet we believe that our model will benefit from adding more data from different cities. We also plan to implement a road detection algorithm to assist our users in road annotations. Stay tuned for more updates on our progress regarding building and road pre-annotations.
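To make the IoU figure reported for the test set more concrete, here is a minimal sketch of pixel-wise intersection over union on two binary masks. The article does not say exactly how the score was computed, so the pixel-wise definition, the function name `mask_iou`, and the toy masks are all assumptions made for illustration.

```python
def mask_iou(pred, target):
    """Pixel-wise IoU between two binary masks given as nested lists of 0/1.

    Assumption: both masks have the same shape.
    """
    intersection = 0
    union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p and t:
                intersection += 1  # pixel is building in both masks
            if p or t:
                union += 1         # pixel is building in at least one mask
    # Convention chosen here (an assumption): two empty masks match perfectly.
    return 1.0 if union == 0 else intersection / union


# Toy 3x3 masks, purely illustrative.
pred = [
    [0, 1, 1],
    [0, 1, 1],
    [0, 0, 0],
]
target = [
    [0, 0, 1],
    [0, 1, 1],
    [0, 0, 1],
]
iou = mask_iou(pred, target)
print(round(iou, 3))
```

In this toy case the masks share 3 building pixels out of 5 covered by either mask, so the IoU is 3/5.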

SPACENET 2 CODE

Our algorithm is based on the winning solution of the SpaceNet building detection challenge. SpaceNet is a corpus of commercial satellite imagery and labeled training data to use for machine learning research. They host building and road detection challenges and open-source the best solutions.

The winner of the second building detection challenge uses a segmentation algorithm called U-Net, then cuts the segmentation mask into building footprints. U-Net can be summarized as an encoder-decoder network with skip connections between the corresponding layers of the encoder and decoder. It is fast to train and performs well even on relatively small datasets. The winner of the SpaceNet challenge used only 4 layers instead of 5 by removing the last layer with 1024 channels. We verified that adding the layer back does not result in any improvement.

We merged the datasets of Vegas, Paris, and Shanghai and trained a single network on the whole data. We did not use the Khartoum annotations because of their lower quality. We also added some augmentation, which helped the network adapt to images from cities not present in the training data. Our PyTorch code is open-sourced here with all the necessary instructions.
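The step of cutting a segmentation mask into individual building footprints can be illustrated with connected-component labeling. This is only a sketch under our own assumptions: the article does not describe the post-processing actually used, and the helper names `split_mask_into_footprints` and `bounding_box`, the 4-connectivity rule, and the toy mask are hypothetical.

```python
from collections import deque


def split_mask_into_footprints(mask):
    """Group foreground pixels of a binary mask into 4-connected components.

    Returns a list of components, each a list of (row, col) pixels.
    """
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    footprints = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill starting from an unvisited building pixel.
            seen[r][c] = True
            queue = deque([(r, c)])
            component = []
            while queue:
                y, x = queue.popleft()
                component.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            footprints.append(component)
    return footprints


def bounding_box(component):
    """Return (min_row, min_col, max_row, max_col) of one component."""
    ys = [y for y, _ in component]
    xs = [x for _, x in component]
    return min(ys), min(xs), max(ys), max(xs)


# Toy thresholded mask containing two separate "buildings".
mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 0, 0],
]
footprints = split_mask_into_footprints(mask)
boxes = [bounding_box(f) for f in footprints]
print(len(footprints), boxes)
```

A real pipeline would more likely label components with an image-processing library and then trace each component into a polygon. The pure-Python version above is only meant to show the idea of one footprint per connected region.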

Figure 2: Steps to run smart predictions on vector projects on SuperAnnotate. Left: First, select images, then click on the smart-prediction icon. Right: Choose “Building detection from aerial imagery” from the list of available models.

SPACENET 2 HOW TO

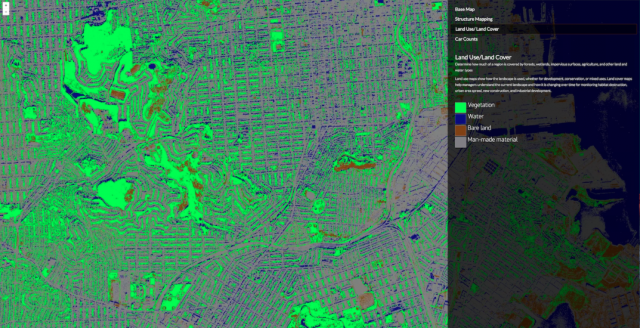

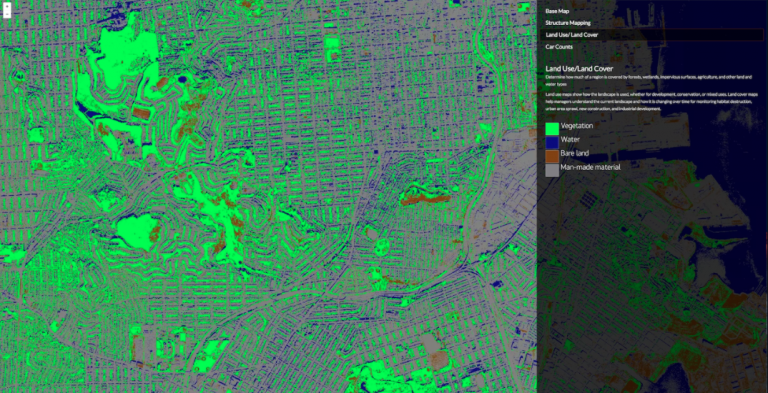

In this article, we would like to present our new building pre-annotation algorithm for aerial imagery, and share its code and the motivation behind integrating it into our platform.

Motivation: Why did we create a building pre-annotation algorithm?

Annotating aerial images is tedious work, and annotating hundreds of thousands of buildings takes a lot of effort and funds. Here at SuperAnnotate, we strive to use state-of-the-art computer vision technology to automate and accelerate the creation of pixel-perfect annotations. As a part of that effort, several smart pre-annotation algorithms were integrated into the platform, allowing our users to fix the auto-generated annotations instead of starting from scratch. That in turn enables users to generate annotations of the same quality with less effort. See Figure 2 for how to get the auto-generated annotations on SuperAnnotate vector projects.